AI Overview

| Category | Summary |
| --- | --- |
| Topic | AI Video Localization Workflow and Complexity for Enterprise |
| Purpose | To educate enterprise decision-makers on the technical and workflow complexities of AI voiceover and lip-sync and to define the essential decisions required for production-grade quality at scale. |
| Key Insight | The biggest source of quality issues and overruns is misaligned expectations, not the technology itself. A strict, four-stage workflow is essential, prioritizing upfront decisions like the Accuracy vs. Timing hierarchy. |
| Best Use Case | Enterprise teams planning to localize training materials, product demos, or executive communications into multiple languages using AI tools. |
| Risk Warning | Changing the script after a lip-synced video is rendered triggers an expensive, full re-render. Deferring key decisions (e.g., target locale, accuracy priority) guarantees budget overruns. |
| Pro Tip | Structure the approval process around the “Golden Document” (approved script/timing) and the “Single-Point Revision” stage to front-load decision-making and minimize costly post-render changes. |

The technology has matured. The workflows have not. Here is how production-grade AI voiceover and lip-sync really works and what it takes to get it right at scale.

Enterprise video content is expanding across languages faster than ever. Market pressure to localize training materials, product demos, executive communications, and marketing assets into multiple languages simultaneously has made AI-assisted voiceover and lip-sync an increasingly attractive solution. It is faster than traditional dubbing, more cost-effective at scale, and when executed correctly it serves the desired purpose very well.

But “when executed correctly” is doing a lot of work in that sentence.

At 1-Stop Asia, our production team launched this service for our clients in the last quarter of last year. Since then, we have tracked incoming projects closely, and after a substantial number of them we can clearly outline what is working and what is not.

What we have learned is that the biggest source of budget overruns, missed deadlines, and quality issues in AI video localization is not the technology itself. It is misaligned expectations about how the technology actually works. This article is our attempt to close that gap.

The Hidden Complexity of AI Video Localization

Most enterprise clients approach AI video localization with an intuition shaped by simpler digital tools: you put content in, you get a translated/localized version out, and if something needs changing, you change it. That mental model is understandable but it does not map onto how AI video production actually works.

The Language Expansion Problem

One of the most consistent technical challenges our team encounters is what we internally call the expansion factor. A well-structured English script, when translated into French, Spanish, German, or many other European languages, will typically run 20 to 30 percent longer. The meaning is preserved, but the phrasing requires more syllables, more words, and more breath.

In traditional dubbing, this is addressed through loose translation, that is, by adapting phrases to fit the timing rather than translating them word for word.

AI systems can do the same, but only if they are given clear direction. If an AI voice model is simply handed a full literal translation and told to fit it into the original timing, the result is an unnaturally accelerated delivery that undermines the professionalism of the content entirely. Getting this right requires a deliberate choice at the outset: do you prioritize translation accuracy, or timing fidelity? Both are achievable but not simultaneously, and not without a clear brief.

What we have seen so far: projects arrive as unstructured raw files with strict time-stamping requirements. Before synthesis, we perform an intensive linguistic “compression” phase that is invisible to the client. It is needed to produce a result that sounds natural and retains the speaker’s original cadence, which is what makes the product feel authentic to the target audience.

How to fix this: This compression phase can be removed from the process if the source script is analyzed and pre-edited through “transcreation” rather than literal translation. By defining “Time-Sync vs. Accuracy” priorities during the briefing phase, we can ensure the translated phrasing is built to fit the audio window without forcing the AI into an accelerated, robotic delivery.
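As a rough illustration of the timing problem, the sketch below estimates how much a voice model would have to speed up to fit a translated line into the original audio window. The function name, the 150 words-per-minute speaking rate, and the 10 percent speed-up tolerance are illustrative assumptions, not figures from our production pipeline; real speaking rates vary by language and speaker.

```python
from dataclasses import dataclass

# Illustrative assumptions, not measured production values.
ASSUMED_WORDS_PER_MINUTE = 150.0
ASSUMED_MAX_SPEEDUP = 1.10


@dataclass(frozen=True)
class FitReport:
    natural_seconds: float   # estimated duration at a natural pace
    speed_factor: float      # values above 1.0 mean accelerated delivery
    needs_adaptation: bool   # True when the speed-up exceeds the tolerance


def check_fit(
    translated_text: str,
    window_seconds: float,
    words_per_minute: float = ASSUMED_WORDS_PER_MINUTE,
    max_speedup: float = ASSUMED_MAX_SPEEDUP,
) -> FitReport:
    """Estimate whether a translated line fits its original timing window."""
    if window_seconds <= 0 or words_per_minute <= 0:
        raise ValueError("window_seconds and words_per_minute must be positive")
    word_count = len(translated_text.split())
    natural_seconds = word_count * 60.0 / words_per_minute
    speed_factor = natural_seconds / window_seconds
    return FitReport(natural_seconds, speed_factor, speed_factor > max_speedup)


if __name__ == "__main__":
    # A 25-word translation of a line that originally filled 8 seconds.
    report = check_fit(" ".join(["mot"] * 25), window_seconds=8.0)
    print(report.speed_factor, report.needs_adaptation)
```

Under these assumptions, a 20-word English line fills an 8-second window, while a translation that runs 25 percent longer (25 words) would need a 1.25x speed-up. A check like this flags the line for adaptation before synthesis rather than after.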

Lip-Sync Is Not an Overlay

For video content where the speaker is visible on screen, lip-sync adds a significant layer of computational and creative complexity. The AI is not simply placing audio over existing footage. It is re-rendering facial movement to match new phoneme patterns. This is a process that, depending on the footage and the language, can require substantial processing time and careful quality review.

The practical implication is important: once a lip-synced video is rendered, any subsequent change to the script (on the client side, this is often just a single word) requires re-rendering the affected scenes from scratch. This is not a workflow inefficiency; it is how the technology’s architecture works. Enterprise teams accustomed to iterative review cycles on standard content need to build their approval process with this constraint in mind.

How to fix this: Implement a “Locked Script” policy prior to the final rendering phase. We suggest a two-stage approval process:

- A final sign-off on the translated audio file alone;

- Commencement of the visual lip-sync rendering only after that audio is 100% confirmed.

This protocol lets us deliver a high-fidelity, visually seamless localized video on schedule and within budget, while reducing additional rendering and minimizing technical overhead.
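The two-stage approval can be pictured as a small state machine in which lip-sync rendering is unreachable until the audio has been signed off. The sketch below is a hypothetical model of that gate; the class, stage, and method names are invented for illustration and do not describe any particular tool.

```python
from enum import Enum, auto


class Stage(Enum):
    AUDIO_REVIEW = auto()     # script and audio can still change
    AUDIO_APPROVED = auto()   # audio signed off, script is locked
    LIP_SYNC = auto()         # visual rendering has started


class LockedScriptError(RuntimeError):
    """Raised when a step is attempted out of sequence."""


class LocalizationJob:
    """Hypothetical job record that enforces a locked-script policy."""

    def __init__(self, script: str) -> None:
        self.script = script
        self.stage = Stage.AUDIO_REVIEW

    def edit_script(self, new_script: str) -> None:
        # Edits are only allowed while the audio is still under review.
        if self.stage is not Stage.AUDIO_REVIEW:
            raise LockedScriptError("script is locked after audio sign-off")
        self.script = new_script

    def approve_audio(self) -> None:
        if self.stage is not Stage.AUDIO_REVIEW:
            raise LockedScriptError("audio has already been approved")
        self.stage = Stage.AUDIO_APPROVED

    def start_lip_sync(self) -> None:
        # Rendering may begin only after the audio sign-off.
        if self.stage is not Stage.AUDIO_APPROVED:
            raise LockedScriptError("lip-sync requires approved audio")
        self.stage = Stage.LIP_SYNC
```

Modeling the lock explicitly means a late wording change surfaces as a conscious exception to the process instead of slipping silently into a render queue.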

Emotional Register and AI Coherence

AI voice synthesis performs best when it has full contextual awareness of the content it is delivering. When scripts are fed to the system in isolated sentences or fragments, which is a common approach in traditional subtitle workflows, the AI loses the paragraph-level context it needs to modulate tone, pacing, and emotional register coherently. The output can feel flat, or worse, tonally inconsistent across a single scene.

Our production team addresses this by structuring scripts as complete contextual units before any voice synthesis begins, and by building in a dedicated tone review step before final rendering.
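One simple way to rebuild context from subtitle-style fragments is to merge consecutive cues that are separated by only a short pause. The sketch below shows that heuristic; the `Cue` type and the one-second gap threshold are assumptions made for illustration, and grouping by pause length is only one possible approach, not a description of our internal tooling.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Cue:
    start: float  # seconds from the start of the video
    end: float
    text: str


def group_into_units(cues: List[Cue], max_gap_seconds: float = 1.0) -> List[Cue]:
    """Merge consecutive cues into larger units when the pause is short."""
    units: List[Cue] = []
    for cue in sorted(cues, key=lambda c: c.start):
        if units and cue.start - units[-1].end <= max_gap_seconds:
            previous = units[-1]
            # Extend the current unit instead of starting a new one.
            units[-1] = Cue(
                previous.start,
                max(previous.end, cue.end),
                f"{previous.text} {cue.text}",
            )
        else:
            units.append(cue)
    return units


if __name__ == "__main__":
    fragments = [
        Cue(0.0, 2.0, "Welcome to"),
        Cue(2.2, 4.0, "the onboarding course."),
        Cue(7.0, 9.0, "Let us begin."),
    ]
    for unit in group_into_units(fragments):
        print(unit.start, unit.end, unit.text)
```

Here the first two fragments become one unit because they are 0.2 seconds apart, while the third stays separate after a three-second pause. In practice a linguist would still review the boundaries, since pauses do not always align with changes in topic or tone.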

What Needs to Be Decided Before Production Begins

In our experience, the projects that run smoothly are those where key decisions are made and documented before the production team touches a single frame of video. The projects that run over budget or over schedule are almost always those where these decisions were deferred, assumed, or left ambiguous.

Before any AI video localization project proceeds to production, the following questions need clear answers:

- Target locale specificity: French for France or French for Canada? Spanish for Spain or for Latin America? These are not interchangeable. AI voice selection, pronunciation models, and cultural adaptation all depend on knowing the precise target locale from the start.

- Voice persona and tone: What is the desired register? A professional corporate narrator, a warm instructional guide, an energetic consumer-facing presenter? The closer this brief is defined upfront, the less room there is for misalignment at review.

- Voice cloning vs. stock AI persona: If the original video features a recognizable company spokesperson or executive, cloning that voice for localized versions often makes sense for brand consistency. If the original speaker is not brand-significant, a high-quality stock AI persona is typically faster and more cost-effective.

- Priority hierarchy (accuracy vs. timing): This is the most consequential decision in the brief. Time-constrained adaptation (adapting the translation to fit the original timing) typically delivers a better viewer experience. Literal translation prioritizes linguistic precision. Both are valid, but the choice must be made before scripting begins, not during review.

- On-screen text and proper nouns: Product names, brand terminology, and specialized vocabulary require specific pronunciation guidance for AI voice models. These should be identified and flagged in the brief, particularly for technical, pharmaceutical, or highly specialized content.
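The checklist above can be captured as a structured brief that reports which decisions are still open. The following is a minimal sketch; the field names, the allowed values, and the rule that a locale must include a region (for example `fr-CA` rather than `fr`) are illustrative assumptions, not a fixed intake format.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class LocalizationBrief:
    """Hypothetical project brief covering the five pre-production decisions."""

    target_locale: Optional[str] = None   # language plus region, e.g. "fr-CA"
    voice_tone: Optional[str] = None      # e.g. "warm instructional guide"
    voice_source: Optional[str] = None    # "clone" or "stock"
    priority: Optional[str] = None        # "accuracy" or "timing"
    pronunciations: Dict[str, str] = field(default_factory=dict)
    pronunciation_reviewed: bool = False  # terms were checked, even if none found

    def open_questions(self) -> List[str]:
        """Return the decisions that still need an answer."""
        issues: List[str] = []
        if not self.target_locale or "-" not in self.target_locale:
            issues.append("target_locale: specify language and region")
        if not self.voice_tone:
            issues.append("voice_tone: describe the desired register")
        if self.voice_source not in ("clone", "stock"):
            issues.append("voice_source: choose 'clone' or 'stock'")
        if self.priority not in ("accuracy", "timing"):
            issues.append("priority: choose 'accuracy' or 'timing'")
        if not self.pronunciation_reviewed:
            issues.append("pronunciations: flag product names and special terms")
        return issues


if __name__ == "__main__":
    draft = LocalizationBrief(target_locale="fr")
    for issue in draft.open_questions():
        print(issue)
```

A brief with only `target_locale="fr"` reports all five items as open, because the region is missing and nothing else has been decided. Production would start only when `open_questions()` returns an empty list.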

How a Production-Grade Workflow Protects Quality and Budget

The discipline of AI video localization is, at its core, a sequencing problem. Get the sequence right, and the technology delivers on its promise. Compress or skip steps to save time, and you will spend more time (and money) fixing downstream problems.

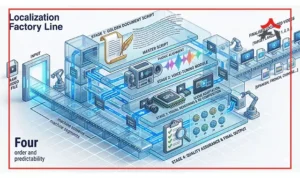

Our production workflow is structured around four stages designed to front-load decision-making and protect the integrity of the final render:

Stage 1: The Golden Document

Before any video processing begins, we produce a time-coded script document that maps the adapted translation directly against the original video timeline.

The client reviews and approves this document (just the script and timing, not the video) before production proceeds. This single step eliminates the majority of expensive post-render revision requests. It is the lowest-cost point at which changes can be made, and it builds shared accountability between the client and our team for what the final output will contain.
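To make the idea of a time-coded script concrete, the sketch below models each line with its timing window, renders a plain-text review table, and computes a fingerprint of the approved version so that later edits are detectable. The row layout, the type and function names, and the use of a SHA-256 hash are assumptions for illustration; the actual document format may differ.

```python
import hashlib
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ScriptLine:
    start: float        # seconds into the original video
    end: float
    source_text: str
    adapted_text: str


def format_timecode(seconds: float) -> str:
    """Format seconds as HH:MM:SS.mmm."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{millis:03d}"


def render_document(lines: List[ScriptLine]) -> str:
    """Render one review row per script line."""
    return "\n".join(
        f"{format_timecode(line.start)} --> {format_timecode(line.end)}"
        f" | {line.source_text} | {line.adapted_text}"
        for line in lines
    )


def fingerprint(lines: List[ScriptLine]) -> str:
    """Hash the rendered document so the approved version can be identified."""
    return hashlib.sha256(render_document(lines).encode("utf-8")).hexdigest()


if __name__ == "__main__":
    script = [ScriptLine(0.0, 2.5, "Welcome.", "Bienvenue.")]
    print(render_document(script))
    print(fingerprint(script))
```

Recording the fingerprint at sign-off gives both sides an unambiguous reference: if a single word changes afterwards, the hash changes, and the change is handled as a post-approval revision.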

Stage 2: Single-Point Revision

Our standard production rate includes one round of consolidated feedback for minor adjustments following initial rendering. We are deliberate about this structure because it encourages clients to review carefully and provide complete feedback in a single pass, rather than trickling in individual comments over time, which is a pattern that is both inefficient and technically disruptive in AI video production.

Stage 3: Post-Render Change Management

Any change to the script after a video has been rendered — regardless of how minor it may appear — triggers a re-rendering process. We treat these as a distinct production activity, billed separately from the original scope, because they are. This is not a punitive policy; it is an accurate reflection of the computational and labor cost involved. Enterprise clients who understand this structure plan their review processes accordingly and, in our experience, rarely need to use it.
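The scope of a post-render change can be estimated by comparing the approved script with the revised one, scene by scene. The sketch below assumes the script is stored as a mapping from a scene identifier to its text and that re-rendering happens per scene; both are simplifying assumptions, and the real re-render scope depends on the tooling and the footage.

```python
from typing import Dict, List


def scenes_to_rerender(approved: Dict[str, str], revised: Dict[str, str]) -> List[str]:
    """Return the scene ids whose text was changed, added, or removed."""
    scene_ids = set(approved) | set(revised)
    return sorted(
        scene_id
        for scene_id in scene_ids
        if approved.get(scene_id) != revised.get(scene_id)
    )


if __name__ == "__main__":
    approved = {
        "scene-01": "Bienvenue dans la formation.",
        "scene-02": "Cliquez sur Démarrer.",
    }
    revised = dict(approved)
    revised["scene-02"] = "Cliquez sur Commencer."  # a single-word change
    print(scenes_to_rerender(approved, revised))
```

In this example the single-word change puts `scene-02` on the re-render list while `scene-01` is left untouched, which is why even a small edit carries a real cost once rendering is complete.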

Stage 4: Delivery Tiers

Standard delivery runs on a three-to-five business day cycle, which accommodates thorough quality review at each stage. For time-sensitive projects, express delivery within 24 to 48 hours is available with appropriate resource allocation. Both tiers deliver the same quality standard; the difference is in scheduling priority and resource intensity.

A Note on Working with Our Production Team

The production team at 1-Stop Asia works most effectively as a partner in the brief-development process, not just an executor of instructions. The questions we ask at the outset of a project are not procedural formalities — they are the inputs that determine whether the AI systems we work with can deliver results worth showing to your audience.

If you are evaluating AI video localization for the first time, or if you have had inconsistent results with other providers, we would encourage you to bring our team into the conversation before your content is finalized. The decisions that matter most in AI video localization are almost always made (or missed) before production begins.

We work across a broad range of content types, industries, and language combinations. What we bring to every project is a production discipline built on understanding where the technology works flawlessly, where it requires careful management, and where human expertise remains essential.

1-Stop Asia Production Team

For production inquiries, contact us at www.1stopasia.com