AI Overview

| Category | Summary |
|---|---|
| Topic | Enterprise procurement standards for localization vendors under new AI regulation (“The AI Prohibition Clause”). |
| Purpose | To inform localization vendors and enterprise procurement teams about the specific contractual and technical requirements coming into effect in 2026 due to AI regulation. |
| Key Insight | The “AI prohibition clause” will mandate strict controls on how Language Service Providers (LSPs) use Generative AI, focusing on data security, IP, and transparency, fundamentally changing vendor selection criteria. |
| Best Use Case | Localization vendors updating their compliance standards; Enterprise procurement teams drafting 2026 vendor contracts. |
| Risk Warning | Non-compliance with the AI prohibition clause will lead to contract termination and significant legal penalties for data breaches or IP infringement. |
| Pro Tip | Start auditing all internal AI/MT workflows immediately to ensure data segregation and clear accountability to meet the new 2026 procurement baseline. |

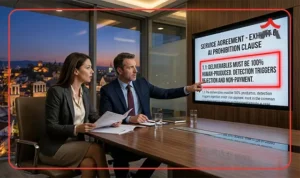

AI prohibition is not coming to localization procurement. It has already arrived, embedded in enterprise contracts with payment cancellation as the stated consequence for non-compliance. Because the requirement is now a formal contract provision rather than an internal guideline, failure to comply carries direct commercial penalties.

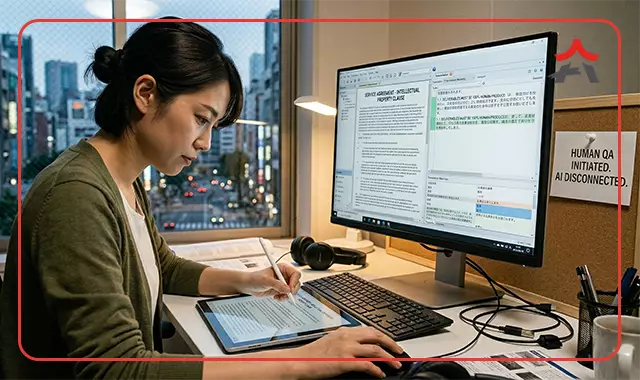

I have seen this shift firsthand in production operations, in ways no survey summary fully captures. Twelve months ago, AI usage was still framed as a controllable variable. Today, the instruction is explicit: deliverables must be human-produced, and non-compliance triggers immediate rejection and non-payment. The conversation has moved upstream into procurement, but the operational burden sits downstream with the vendors who actually produce the work.

This article is about that gap: what the clause requires, where compliance currently breaks, and what vendor managers need to put in place to verify it.

What the clause actually says and what it doesn’t

The AI prohibition clause, documented in the 1-StopAsia State of Language Operations Outsourcing 2025/2026 Report, is now more than a preference for human translation. It is a requirement with defined enforcement. Across multiple sectors, including regulated environments like Life Sciences, FinTech, and Legal, the clause appears with three consistent elements.

First, contractors are required to provide written confirmation that deliverables are human-produced. Second, unauthorized AI usage triggers immediate rejection of the deliverable. This is not discretionary. If AI involvement is detected or suspected, the output is treated as non-compliant regardless of quality. Third, and most critically, payment cancellation follows rejection. The financial consequence is explicit. Work completed using AI tools in violation of the clause is not billable.

The scope of this requirement is also unambiguous. It applies across all language pairs, content types, and project volumes. There are no carve-outs for “low-stakes” content or high-volume production. Whether the task is regulatory documentation or user interface strings, the standard is the same: human production.

What the clause does not specify is how this requirement is verified beyond the first tier of the supply chain. Enterprise clients contract with language service providers (LSPs). The clause binds the LSP, but the actual production, particularly for specialized areas like Asian language translation, is often executed by subcontractors operating at tier two. The clause states what must be delivered. It does not define how the LSP ensures that the subcontractor complied.

Why self-certification is not compliance

In practice, AI compliance in translation workflows currently operates at three levels of assurance. Understanding the difference between them is essential because the market is moving quickly from the weakest to the strongest, and most evaluation frameworks have not caught up.

Level 1 – Written confirmation

This is the baseline required by most contracts today. A contractor confirms in writing that no AI was used in the production of deliverables. It satisfies the formal requirement of the clause. It is also the weakest form of assurance because a written statement is not verifiable on its own. A linguist or subcontractor who used AI tools can still sign a human-production confirmation. There is no inherent mechanism in the statement itself that proves compliance. It is a declaration, not evidence.

Most LSP vendor agreements currently operate at this level. It is administratively simple and contractually clean. It is also insufficient, given the enforcement mechanism attached to the clause.

Level 2 – Process documentation

At this level, the vendor documents a production workflow that is structured to make AI usage improbable.

This includes elements such as ISO 17100 certification, documented in-house linguist employment models, translation memory (TM) and QA logs, and platform-level access controls that restrict the use of unauthorized tools. The idea is not to prove that AI was not used in a specific instance, but to demonstrate that the production environment itself limits or prevents AI substitution.

This level of assurance is significantly stronger. It introduces friction against non-compliant behavior and provides a defensible position if compliance is questioned.

Level 3 – Auditable project-level evidence

This is where AI compliance moves from policy to verifiable fact. At this level, the vendor can provide project-specific evidence of human production on request, including linguist assignment records, QA run logs, segment-level edit histories, and traceable activity within production platforms. This allows an LSP, and by extension its enterprise client, to audit a specific deliverable and confirm how it was produced.

This is the standard expected in regulated sectors. It aligns with broader compliance practices where process transparency and traceability are required, not assumed.

The gap is clear. Enterprise clients issuing AI prohibition clauses are operating with an expectation of Level 2 or Level 3 assurance. Most LSP subcontractor evaluation frameworks are still anchored at Level 1.

This is a problem of timing rather than a lack of diligence. The shift from AI as an operational variable to AI as a contractual risk happened within a single twelve-month window. Evaluation frameworks built eighteen months ago were not designed for this requirement. But the contracts have already moved, and the enforcement mechanism is already in place.
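To make the distinction between the three levels concrete, here is a minimal Python sketch of how a vendor manager might record a subcontractor's evidence and map it to a level. It is a hypothetical model, not a scheme from the 1-StopAsia report: the field names, and the assumption that the levels are cumulative (Level 3 requires everything Level 2 does), are choices made for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class AssuranceLevel(IntEnum):
    """The three levels of assurance described above, plus 'none'."""
    NONE = 0
    WRITTEN_CONFIRMATION = 1   # Level 1: a declaration, not evidence
    PROCESS_DOCUMENTATION = 2  # Level 2: workflow makes AI substitution improbable
    PROJECT_EVIDENCE = 3       # Level 3: auditable, project-specific records


@dataclass
class VendorEvidence:
    # Hypothetical field names; this is not a standard schema.
    signed_confirmation: bool = False          # Level 1
    documented_workflow: bool = False          # Level 2, e.g. ISO 17100 alignment
    platform_tool_controls: bool = False       # Level 2
    tm_and_qa_logs: bool = False               # Level 2
    linguist_assignment_records: bool = False  # Level 3
    segment_edit_histories: bool = False       # Level 3


def assess(e: VendorEvidence) -> AssuranceLevel:
    """Return the highest level whose (assumed) requirements are all met.

    Levels are treated as cumulative: missing Level 1 evidence caps the
    result at NONE, and missing Level 2 evidence caps it at Level 1,
    even if project-level records exist.
    """
    if not e.signed_confirmation:
        return AssuranceLevel.NONE
    if not (e.documented_workflow and e.platform_tool_controls and e.tm_and_qa_logs):
        return AssuranceLevel.WRITTEN_CONFIRMATION
    if not (e.linguist_assignment_records and e.segment_edit_histories):
        return AssuranceLevel.PROCESS_DOCUMENTATION
    return AssuranceLevel.PROJECT_EVIDENCE


# A vendor that only signs a confirmation sits at Level 1.
print(assess(VendorEvidence(signed_confirmation=True)).name)  # WRITTEN_CONFIRMATION
```

Making the levels cumulative is a design choice: a vendor with edit histories but no documented workflow is scored at Level 1 here, which a stricter or looser framework might handle differently.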

What the evaluation framework should require

Closing this gap requires translating the three levels of assurance into concrete procurement criteria: what you ask, what you require, and what you contract.

Contractual requirements

Start with the contract itself. The AI prohibition must be specified at the production level, not just at the LSP level. This means extending the clause explicitly to subcontractors and production partners.

The agreement should include:

- A written confirmation requirement tied to each deliverable

- A defined rejection mechanism for non-compliance

- A clear payment consequence aligned with the enterprise contract

If the clause stops at the LSP boundary, the compliance chain is already broken.
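One way to check whether the clause actually reaches production is to model the supply chain as a tree and look for any party not bound by all three elements. The Python below is a hypothetical sketch; the class names, fields, and tier numbering are assumptions for illustration, not part of any standard contract template.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ClauseTerms:
    # The three elements listed above; names are illustrative.
    per_deliverable_confirmation: bool
    rejection_mechanism: bool
    payment_consequence: bool

    def complete(self) -> bool:
        return (self.per_deliverable_confirmation
                and self.rejection_mechanism
                and self.payment_consequence)


@dataclass
class Party:
    name: str
    tier: int                             # 1 = LSP, 2 = its subcontractor, ...
    clause: Optional[ClauseTerms] = None  # None = clause not flowed down
    subcontractors: List["Party"] = field(default_factory=list)


def broken_links(party: Party) -> List[str]:
    """Walk the supply chain depth-first and name every party that is
    not bound by a complete AI prohibition clause."""
    broken = [] if party.clause and party.clause.complete() else [party.name]
    for sub in party.subcontractors:
        broken.extend(broken_links(sub))
    return broken


full = ClauseTerms(True, True, True)
tier2 = Party("Tier-2 subcontractor", tier=2)  # clause stops at the LSP
lsp = Party("LSP", tier=1, clause=full, subcontractors=[tier2])
print(broken_links(lsp))  # ['Tier-2 subcontractor']
```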

Process verification

Next, assess whether the vendor can document a production workflow that supports human translation as a structural standard.

Key questions include:

- Is the workflow aligned with recognized standards such as ISO 17100?

- Are linguists employed in-house or managed within controlled vendor pools?

- What controls exist at the platform level to restrict unauthorized tool usage?

- Can the vendor provide TM and QA logs that reflect human-driven processes?

The goal here is to reduce the probability of non-compliance to a defensible level.

Audit capability

This is the area where most evaluation frameworks are currently weakest and where they need to evolve. You should require that vendors can provide project-level evidence of human production on request. This includes:

- Linguist assignment records for each project

- QA logs showing human review stages

- Segment-level edit histories within CAT tools or production platforms

Just as important is the operational aspect:

- Is this evidence available within a defined SLA?

- Is it standardized across projects or assembled ad hoc when requested?

If a vendor cannot produce this evidence within a reasonable timeframe, the audit capability is not operational.
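The audit questions above can be tracked per evidence request. This sketch assumes a hypothetical evidence log with three evidence labels and a contractual SLA passed in as a `timedelta`; the names, the project ID, and the three-day SLA in the example are illustrative, not recommended values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional, Set

# The three evidence types listed above; labels are illustrative.
REQUIRED_EVIDENCE = {"linguist_assignment", "qa_log", "segment_edit_history"}


@dataclass
class EvidenceRequest:
    project_id: str
    requested_at: datetime
    delivered_at: Optional[datetime]  # None = vendor has not responded
    evidence_types: Set[str]


def audit_passes(req: EvidenceRequest, sla: timedelta) -> bool:
    """True only if complete project-level evidence arrived within the SLA."""
    if req.delivered_at is None:
        return False
    on_time = req.delivered_at - req.requested_at <= sla
    complete = REQUIRED_EVIDENCE <= req.evidence_types  # subset check
    return on_time and complete


def pass_rate(requests: Iterable[EvidenceRequest], sla: timedelta) -> float:
    """Share of requests answered completely and on time. A low rate may
    indicate evidence is assembled ad hoc rather than kept as standard."""
    results = [audit_passes(r, sla) for r in requests]
    return sum(results) / len(results) if results else 0.0


req = EvidenceRequest(
    project_id="PRJ-001",  # hypothetical project ID
    requested_at=datetime(2026, 1, 5, 9, 0),
    delivered_at=datetime(2026, 1, 7, 17, 0),
    evidence_types={"linguist_assignment", "qa_log", "segment_edit_history"},
)
print(audit_passes(req, sla=timedelta(days=3)))  # True
```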

Scope consistency

The AI prohibition framework must apply consistently across all work types. Any carve-outs introduce risk. Evaluate whether the vendor’s policy applies:

- Across all language pairs, including complex areas like Asian language translation

- Across all content types, from regulated documentation to high-volume content

- Across all project sizes

A “partial” prohibition is not aligned with how enterprise contracts are written. The requirement is uniform.
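A vendor's written policy can be reduced to a list of exclusions and checked for carve-outs. In this hypothetical Python sketch, any non-empty result marks the policy as partial; the field names and the word-count threshold are assumptions, not terms drawn from a real contract.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class VendorAIPolicy:
    # Hypothetical structured summary of a vendor's written AI policy.
    # Empty sets / None mean "no exclusion" on that dimension.
    excluded_language_pairs: Set[str] = field(default_factory=set)
    excluded_content_types: Set[str] = field(default_factory=set)
    min_project_words: Optional[int] = None  # policy applies only at or above this size


def carve_outs(policy: VendorAIPolicy) -> List[str]:
    """List every scope exclusion; any entry means the policy is partial."""
    found = [f"language pair: {p}" for p in sorted(policy.excluded_language_pairs)]
    found += [f"content type: {c}" for c in sorted(policy.excluded_content_types)]
    if policy.min_project_words is not None:
        found.append(f"projects under {policy.min_project_words} words")
    return found


policy = VendorAIPolicy(excluded_content_types={"UI strings"}, min_project_words=500)
print(carve_outs(policy))
# ['content type: UI strings', 'projects under 500 words']
```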

Toolchain coverage

Finally, extend the evaluation beyond final translation. AI usage can occur at multiple stages of the production chain:

- Pre-processing and content preparation

- Terminology research

- QA and validation steps

The vendor’s compliance framework should explicitly address all stages. Limiting the prohibition to final translation output leaves gaps elsewhere in the workflow.
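A minimal sketch of a stage-coverage check, assuming the vendor's policy is summarized as a mapping from production stage to whether the prohibition explicitly covers it. The stage labels follow the list above plus final translation; the function name and data shape are hypothetical.

```python
from typing import Dict, List

# Stages named in the text, plus final translation; labels are illustrative.
PRODUCTION_STAGES = (
    "pre-processing and content preparation",
    "terminology research",
    "translation",
    "qa and validation",
)


def uncovered_stages(policy_coverage: Dict[str, bool]) -> List[str]:
    """Return stages the vendor's AI prohibition does not explicitly cover.

    A stage missing from the mapping is treated as uncovered: silence in
    the policy is not the same as a prohibition.
    """
    return [s for s in PRODUCTION_STAGES if not policy_coverage.get(s, False)]


# A policy that only addresses final translation output:
print(uncovered_stages({"translation": True}))
# ['pre-processing and content preparation', 'terminology research', 'qa and validation']
```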

Closing: the gap is already operational

The shift from AI as an emerging concern to AI as a contractual standard with payment consequences happened within twelve months. That speed matters because procurement frameworks did not evolve at the same pace.

What was sufficient eighteen months ago (written confirmation, basic vendor vetting, trust in process) is no longer aligned with the contracts being signed today. The requirement now is not just to state compliance, but to verify it across the full supply chain.

For LSP vendor managers and operations directors, this is not a future-proofing exercise. The exposure already exists wherever production is outsourced without auditable controls. Every project delivered under an AI prohibition clause carries the same question: Can you prove how this was produced if asked?

Some organizations already have the framework to answer that. Many are still operating on trust at tier two.